Micro-partitions are the foundation of Snowflake’s storage layer. They largely determine how much data a query has to read, and therefore how fast it runs and how much it costs. This guide explains how micro-partitions work, how to manage them, and how to optimize tables around them.

Understanding Micro-Partitions in Snowflake

Snowflake stores every table as a set of micro-partitions, and they shape how data is stored, retrieved, and processed. This section covers what micro-partitions are, how they are structured, and the benefits they provide.

What are Micro-Partitions?

At the heart of Snowflake’s storage architecture are micro-partitions: contiguous units of storage that each hold between 50 MB and 500 MB of uncompressed data. The stored size is smaller, because data is always compressed. Tables are divided into micro-partitions automatically, so there is nothing to define or maintain up front.

When data is loaded into Snowflake, it is automatically compressed and stored in these micro-partitions. Within each micro-partition, data is organized by column rather than by row: the values of each column are stored together and compressed independently. This improves storage efficiency and lets a query read only the columns it references.

The Role of Micro-Partitions in Snowflake’s Architecture

Efficient Data Storage and Compression

Snowflake’s ability to handle massive datasets with ease is largely due to the efficient way it handles data storage. By automatically compressing data as it is loaded, Snowflake ensures that storage space is used optimally. The use of columnar storage within each micro-partition enhances this effect by reducing the amount of data that needs to be loaded and scanned for query operations, thereby improving performance and reducing costs.
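The effect of a columnar layout can be illustrated with a toy example. This is only a sketch: the rows and column names are invented, and run-length encoding stands in for whatever compression Snowflake selects internally, which is not described here.

```python
from itertools import groupby

# Hypothetical rows, as they might arrive in a load file.
rows = [
    ("2024-01-01", "US", 10),
    ("2024-01-01", "US", 12),
    ("2024-01-01", "DE", 7),
    ("2024-01-02", "DE", 9),
    ("2024-01-02", "DE", 4),
]

def to_columns(rows, names):
    """Pivot row tuples into one list per column (a columnar layout)."""
    return {name: [row[i] for row in rows] for i, name in enumerate(names)}

def run_length_encode(values):
    """Collapse runs of equal adjacent values into (value, count) pairs."""
    return [(value, len(list(group))) for value, group in groupby(values)]

columns = to_columns(rows, ["order_date", "country", "amount"])

# A query that only needs "amount" touches one list, not every row tuple.
total_amount = sum(columns["amount"])

# Repetitive columns shrink well once their values sit next to each other.
encoded_dates = run_length_encode(columns["order_date"])
```

Here five date values collapse into two (value, count) pairs, and summing `amount` never reads the other two columns.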

Metadata-Driven Data Management

Each micro-partition in Snowflake is equipped with rich metadata, which includes details such as the minimum and maximum values of each column, the number of rows, and other data characteristics. This metadata is crucial for optimizing data retrieval processes. It allows Snowflake’s query engine to quickly determine which partitions are relevant to a query and which can be ignored, a process known as partition pruning. This targeted data retrieval method significantly speeds up query performance by reducing the amount of data that needs to be scanned.

How Micro-Partitions Enhance Query Performance

This metadata-driven skipping is often called micro-partition pruning. When a query is executed, Snowflake compares the query’s filter predicates with each micro-partition’s metadata and skips every partition that cannot contain matching rows, thus minimizing the amount of data read and processed. This not only speeds up query times but also reduces the computational load, leading to cost savings on compute resources.
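The pruning decision can be sketched in a few lines. The `PartitionStats` class, the partition names, and the value ranges below are hypothetical; the sketch only shows the min/max overlap test, not Snowflake’s actual implementation.

```python
from dataclasses import dataclass

@dataclass
class PartitionStats:
    """Hypothetical per-partition metadata for one column."""
    name: str
    min_value: int
    max_value: int
    row_count: int

def prune(partitions, low, high):
    """Keep only partitions whose [min, max] range overlaps [low, high]."""
    return [p for p in partitions if p.max_value >= low and p.min_value <= high]

partitions = [
    PartitionStats("p1", 1, 100, 50_000),
    PartitionStats("p2", 101, 200, 50_000),
    PartitionStats("p3", 201, 300, 50_000),
    PartitionStats("p4", 150, 250, 50_000),
]

# Equivalent of: WHERE order_id BETWEEN 120 AND 160
survivors = prune(partitions, 120, 160)
scanned = [p.name for p in survivors]
```

Only `p2` and `p4` survive the filter, so half of the partitions are skipped without reading any of their rows. Note that `p4` overlaps `p2`: overlapping ranges are what make pruning less effective.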

Optimizing Data with Clustering Keys

Clustering keys give you control over how data is organized across micro-partitions. A clustering key is one or more columns (or expressions) that Snowflake uses to co-locate related rows in the same micro-partitions. By choosing effective clustering keys, typically columns that are often used in query filters, you can ensure that related data is stored close together. This proximity minimizes the number of micro-partitions that need to be scanned during queries, further enhancing performance.
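A small simulation shows why co-location matters. It assumes a simplified model in which a "partition" is a fixed-size chunk of rows with a recorded min and max; the `day_number` column and chunk size are made up for illustration.

```python
import random

def build_partitions(values, rows_per_partition):
    """Pack values into fixed-size chunks and record each chunk's (min, max)."""
    chunks = [values[i:i + rows_per_partition]
              for i in range(0, len(values), rows_per_partition)]
    return [(min(chunk), max(chunk)) for chunk in chunks]

def partitions_scanned(stats, low, high):
    """Count chunks whose (min, max) range overlaps the filter range."""
    return sum(1 for lo, hi in stats if hi >= low and lo <= high)

random.seed(7)  # fixed seed so the shuffle is repeatable
day_numbers = list(range(1, 1001))  # hypothetical values 1..1000
shuffled = day_numbers[:]
random.shuffle(shuffled)

unclustered = build_partitions(shuffled, 100)
clustered = build_partitions(sorted(shuffled), 100)

# Filter: day_number BETWEEN 250 AND 260
scanned_unclustered = partitions_scanned(unclustered, 250, 260)
scanned_clustered = partitions_scanned(clustered, 250, 260)
```

With the values sorted, the filter touches a single chunk. With the same values in random order, nearly every chunk spans most of the value range and has to be scanned.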

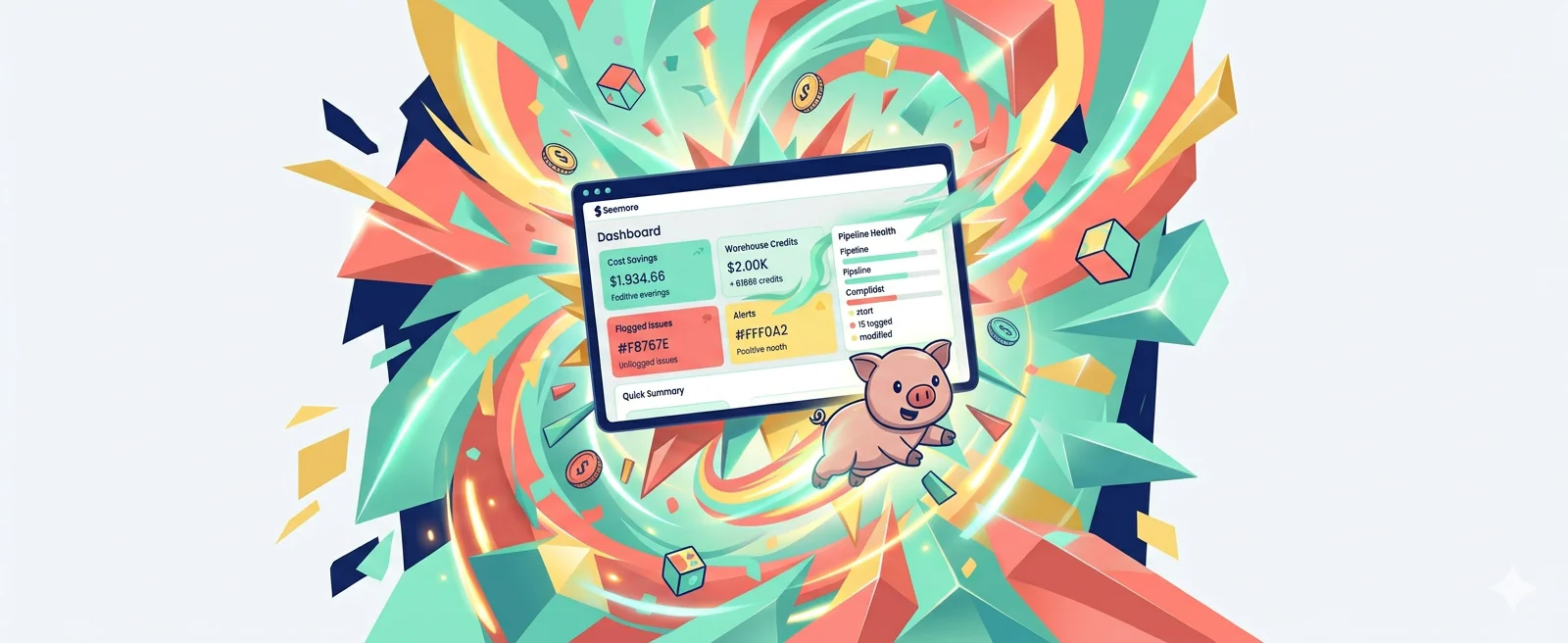

Snowflake Partitioning: Cost Implications of Micro-Partition Management

Efficient micro-partition management can lead to significant cost reductions. Snowflake bills storage and compute separately. Well-clustered tables reduce compute costs, because queries scan fewer micro-partitions and finish sooner, while columnar compression keeps storage costs down. Reclustering is not free, however: Automatic Clustering consumes credits, and the micro-partitions it replaces continue to count toward storage until they age out of Time Travel and Fail-safe. Weigh the maintenance cost against the query savings, and revisit the decision as data volumes grow and query patterns change.

Best Practices for Managing Micro-Partitions

Effective management of micro-partitions is crucial for maximizing the performance and cost-efficiency of your Snowflake environment. Here are some best practices:

1. Optimize Data Clustering

Choose Effective Clustering Keys:

Select clustering keys that align with your most common query filters. This alignment ensures that related data is stored close together within micro-partitions, minimizing the number of partitions the query engine needs to scan and thus speeding up query performance.

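Monitor Automatic Clustering:

Once a clustering key is defined, Snowflake’s Automatic Clustering service reclusters the table in the background as new data is inserted or existing data is updated. Manual reclustering has been deprecated, so the practical controls are monitoring clustering health, suspending or resuming the service for a table (ALTER TABLE with SUSPEND RECLUSTER or RESUME RECLUSTER), and revising the clustering key if query performance declines.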

2. Implement Efficient Data Loading Strategies

Sort Data on Load:

Loading data in the order of your clustering keys can significantly improve the initial clustering effectiveness. This practice reduces the need for immediate reclustering and enhances query performance right from the start.

Use Bulk Loading:

Bulk loading data into Snowflake can help optimize how data is distributed across micro-partitions. It minimizes fragmentation and ensures that each partition is filled efficiently, reducing storage overhead and enhancing retrieval speeds.
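The fragmentation argument can be made concrete with some arithmetic. The sketch below rests on a deliberately simplified assumption: every load writes its own partitions and never tops up one left partly empty by an earlier load. The capacity figure is hypothetical.

```python
import math

def partitions_written(batch_sizes, rows_per_partition):
    """Simplified model: every load writes its own partitions and never
    tops up a partition left half-full by an earlier load."""
    return sum(math.ceil(size / rows_per_partition) for size in batch_sizes)

ROWS_PER_PARTITION = 100_000  # hypothetical capacity, for illustration only
TOTAL_ROWS = 1_000_000

one_bulk_load = [TOTAL_ROWS]
many_small_loads = [1_000] * 1_000  # same rows, loaded 1,000 at a time

bulk_count = partitions_written(one_bulk_load, ROWS_PER_PARTITION)
trickle_count = partitions_written(many_small_loads, ROWS_PER_PARTITION)
```

Under this model the single bulk load writes 10 full partitions, while the trickle of small loads writes 1,000 nearly empty ones for the same data.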

3. Monitor Micro-Partition Performance

Use Clustering System Functions:

Snowflake provides system functions that report on the clustering state of a table. SYSTEM$CLUSTERING_INFORMATION returns the partition count, average overlaps, average depth, and a depth histogram, and SYSTEM$CLUSTERING_DEPTH returns the average depth alone. Review these regularly to understand partition efficiency and identify potential areas for optimization.
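As one way to act on this, the JSON string returned by SYSTEM$CLUSTERING_INFORMATION can be parsed and reduced to a few headline numbers. In the sketch below, the `sales` table, the sample values, and the `max_depth` threshold are all invented for illustration; only the field names follow the function’s documented output.

```python
import json

# Made-up sample in the shape returned by
# SELECT SYSTEM$CLUSTERING_INFORMATION('sales', '(order_date)');
sample_output = """
{
  "cluster_by_keys": "LINEAR(order_date)",
  "total_partition_count": 1200,
  "total_constant_partition_count": 300,
  "average_overlaps": 14.5,
  "average_depth": 9.2,
  "partition_depth_histogram": {"00001": 300, "00008": 500, "00016": 400}
}
"""

def summarize_clustering(raw_json, max_depth=4.0):
    """Extract headline numbers and flag tables whose average depth
    exceeds max_depth (a hypothetical threshold; tune it per table)."""
    info = json.loads(raw_json)
    total = info["total_partition_count"]
    return {
        "average_depth": info["average_depth"],
        "constant_fraction": info["total_constant_partition_count"] / total,
        "needs_review": info["average_depth"] > max_depth,
    }

summary = summarize_clustering(sample_output)
```

A lower average depth generally indicates better clustering, but there is no universal cutoff; the threshold is best calibrated against the query performance you actually observe.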

Analyze Query Execution Plans:

Regularly review the Query Profile of your frequent queries and compare the number of partitions scanned with the total number of partitions on each table scan. If a selective filter still scans most of the partitions, it may be time to adjust your clustering strategy.
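The same comparison can be automated over query history. The sketch below assumes rows of (query_id, partitions scanned, partitions total), which is the kind of data exposed by the PARTITIONS_SCANNED and PARTITIONS_TOTAL columns of the ACCOUNT_USAGE.QUERY_HISTORY view; the sample rows and the 50% threshold are hypothetical.

```python
def pruning_report(queries, min_pruned_fraction=0.5):
    """Return (query_id, pruned_fraction) for queries that skipped less than
    min_pruned_fraction of their partitions. The threshold is an assumption."""
    flagged = []
    for query_id, scanned, total in queries:
        if total == 0:
            continue  # nothing to scan, e.g. metadata-only queries
        pruned_fraction = 1 - scanned / total
        if pruned_fraction < min_pruned_fraction:
            flagged.append((query_id, round(pruned_fraction, 2)))
    return flagged

# Hypothetical rows: (query_id, partitions_scanned, partitions_total)
history = [
    ("q1", 12, 1200),
    ("q2", 1150, 1200),
    ("q3", 0, 0),
    ("q4", 600, 1200),
]

poorly_pruned = pruning_report(history)
```

Only `q2` is flagged here, since it skipped about 4% of its partitions. A low pruned fraction is not a problem in itself (a full-table aggregate has nothing to skip), so flagged queries are candidates for review rather than proof of poor clustering.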

4. Manage Micro-Partition Size and Count

Watch Micro-Partition Size:

You cannot set the size of micro-partitions directly; Snowflake manages it automatically. The amount of data you load and the manner in which you load it still influence partition sizes: frequent small inserts tend to produce many small micro-partitions, while larger batches fill them more evenly. Keep an eye on partition sizes and adjust your data loading processes if you notice sizes creeping outside the typical range.

Control Partition Count:

Excessive partition counts can lead to overhead in metadata management and can slow down query performance. If your table has a large number of small partitions, consider ways to consolidate data, such as adjusting your clustering keys or changing how data is loaded.

5. Advanced Partitioning Techniques

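Use Multi-Column Clustering Keys:

For complex datasets with multiple frequent query patterns, consider a clustering key with more than one column. Snowflake supports multi-column keys and recommends keeping them to three or four columns at most, since each additional column tends to add more maintenance cost than benefit. A well-chosen multi-column key can further reduce the number of partitions scanned during queries.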

Implement Materialized Views:

Materialized views in Snowflake can leverage micro-partitions to store pre-aggregated or frequently accessed query results. This can speed up access to commonly used data and reduce the load on the main tables.

Advanced Techniques for Micro-Partition Optimization

1. Strategic Use of Clustering Keys

Choosing the right clustering keys is crucial for arranging data within micro-partitions efficiently. For advanced users, consider these strategies:

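Multi-Column Clustering Keys

If your queries commonly filter on multiple columns, a multi-column clustering key can substantially reduce the number of micro-partitions scanned. Column order matters: the leading columns of the key have the greatest effect on how data is co-located, and Snowflake recommends ordering the columns from lowest to highest cardinality. Aligning the key with your most important filter combinations improves performance across varied workloads.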
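The effect of column order in a multi-column key can be explored with a toy model. The sketch assumes a hypothetical table with a `region` and a `day` column, sorts it two ways, packs it into fixed-size chunks, and counts how many chunks a filter on each column has to scan. It models sorting only, not Snowflake’s actual clustering algorithm.

```python
def pack(rows, rows_per_partition):
    """Split rows into chunks; record (min, max) per column for each chunk."""
    stats = []
    for i in range(0, len(rows), rows_per_partition):
        chunk = rows[i:i + rows_per_partition]
        regions = [region for region, _ in chunk]
        days = [day for _, day in chunk]
        stats.append({"region": (min(regions), max(regions)),
                      "day": (min(days), max(days))})
    return stats

def scanned(stats, column, value):
    """Count chunks that cannot be skipped for the filter column = value."""
    return sum(1 for s in stats if s[column][0] <= value <= s[column][1])

# Hypothetical table: 4 regions x 100 days, one row per combination.
rows = [(region, day) for region in range(4) for day in range(100)]

region_first = pack(sorted(rows, key=lambda r: (r[0], r[1])), 40)
day_first = pack(sorted(rows, key=lambda r: (r[1], r[0])), 40)

results = {
    "region_first": (scanned(region_first, "region", 2),
                     scanned(region_first, "day", 50)),
    "day_first": (scanned(day_first, "region", 2),
                  scanned(day_first, "day", 50)),
}
```

Out of 10 chunks, the region-first ordering scans 3 for a region filter and 6 for a day filter, while the day-first ordering scans 10 and 1 respectively. The leading column prunes best, and the low-cardinality-first ordering keeps both filters at least partly selective.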

Dynamic Clustering Key Adjustment

Regularly review and adjust your clustering keys based on changing query patterns and data growth. This proactive approach helps maintain optimal data organization within micro-partitions, ensuring efficient data retrieval.

2. Custom Partition Sizing

While Snowflake automatically manages the size of micro-partitions, advanced users can influence partition behavior through data loading practices:

Optimized Data Loading

Load data in a manner that maximizes the efficiency of partition sizing. For instance, sorting data by clustering keys before loading can help maintain optimal partition sizes, reducing overhead and improving query performance.

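How DML Rewrites Micro-Partitions

Micro-partitions are immutable: Snowflake never modifies one in place. Inserts add new micro-partitions, and updates or deletes write new micro-partitions that replace the affected ones, with the old versions retained for Time Travel. Understanding this explains why frequent small DML operations can leave a table with many undersized or poorly clustered partitions, and monitoring for that pattern can be crucial for maintaining performance in large-scale environments.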
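Because micro-partitions are immutable, a change to even one row means writing a replacement partition rather than editing the existing one. The following sketch models that copy-on-write behavior with tuples standing in for partitions; the function name and data are hypothetical, and real DML handling is considerably more involved.

```python
def update_rows(partitions, predicate, transform):
    """Return a new list of partitions. Any partition containing a matching
    row is replaced by a freshly written copy; the rest are reused as-is."""
    result = []
    rewritten = 0
    for partition in partitions:
        if any(predicate(row) for row in partition):
            result.append(tuple(transform(row) if predicate(row) else row
                                for row in partition))
            rewritten += 1
        else:
            result.append(partition)  # untouched partition is shared, not copied
    return result, rewritten

# Tuples stand in for immutable partitions; values are hypothetical.
table_v1 = [(1, 2, 3), (4, 5, 6), (7, 8, 9)]

# Equivalent of: UPDATE t SET x = x * 10 WHERE x = 5
table_v2, rewritten = update_rows(table_v1, lambda x: x == 5, lambda x: x * 10)
```

One row changed, yet a whole partition was rewritten, and `table_v1` is still intact afterwards. That retained older version is what makes features like Time Travel possible, and it is also why widely scattered updates are expensive.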

3. Effective Data Pruning

Leverage the metadata associated with micro-partitions to enhance data pruning strategies:

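Custom Metadata Queries

Use the clustering system functions to understand data distribution and access patterns. SYSTEM$CLUSTERING_INFORMATION accepts a column list as an argument, so you can evaluate how well a table is clustered on candidate columns before committing to a clustering key. This information can be used to fine-tune query design and improve partition pruning effectiveness.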

Enhanced Query Filtering

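Design queries with precise filter conditions on the stored column values, so that the query engine can compare them directly with the min/max values in micro-partition metadata and skip irrelevant partitions. Predicates that wrap the filtered column in a function or cast can limit how much pruning is possible, so check the partitions scanned when a query is slower than expected.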

4. Automated Partition Management

Automate the monitoring and management of micro-partitions to maintain optimal system performance:

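Automatic Clustering

For tables with a clustering key, Snowflake’s Automatic Clustering service reclusters data in the background as it evolves, so no scheduled job or manual intervention is needed. What is worth automating is oversight: tracking the credits the service consumes and suspending it for tables where the cost outweighs the query benefit.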

Monitoring Scripts

Develop scripts that continuously monitor the performance and efficiency of micro-partitions. These scripts can trigger alerts or automated processes to adjust system settings or reorganize data as needed.

5. Leveraging Materialized Views

Materialized views can be strategically used to optimize frequently executed queries:

Pre-Aggregated Data Storage

Store pre-aggregated data in materialized views that are clustered according to common query patterns. This reduces the load on the main tables and speeds up complex analytical queries.

Alternative Clustering Through Materialized Views

Snowflake does not offer indexed views, and queries cannot target individual micro-partitions. A comparable effect is available by defining a materialized view with a clustering key different from that of the base table, so that queries filtering on other columns still prune well for critical workloads.

Conclusion: Harnessing the Full Power of Snowflake

Mastering micro-partitions in Snowflake involves understanding their architecture, choosing clustering keys and loading practices deliberately, and monitoring the results. Organizations that do this well get faster queries, lower costs, and more agile data operations. Apply the practices outlined here, measure their impact, and adjust as data volumes and query patterns change.