Data FinOps

What Is Data FinOps?

Data FinOps describes the practice of controlling and managing spending across modern data platforms such as Snowflake, Databricks, BigQuery, and Redshift.

Data teams run queries, pipelines, dashboards, and AI workloads every day. Each action consumes compute, storage, or services that generate cost. Data FinOps introduces financial discipline into the data stack so teams understand where money goes and how engineering decisions influence spend.

Unlike traditional cloud FinOps, which focuses on infrastructure, Data FinOps operates inside the data warehouse and analytics layer.

Teams track query costs, pipeline frequency, warehouse sizing, and user behavior so leaders can connect data usage with financial impact.

Why Data FinOps Matters

Data platforms scale quickly. Costs often grow faster than visibility into the usage that drives them.

Data engineers launch pipelines, analysts schedule dashboards, and BI tools run automated queries. Finance teams then receive a bill without knowing which workload generated it.

Data FinOps brings structure to that environment.

Organizations that adopt Data FinOps usually focus on several goals:

- Connect warehouse spend to teams, queries, or data assets

- Detect inefficient pipelines and unused data flows

- Identify queries that consume excessive compute

- Adjust warehouse sizing and scheduling behavior

- Create accountability for data usage across teams

When teams understand the financial impact of their workloads, they make better architectural decisions.

Core Principles of Data FinOps

Strong Data FinOps practices rely on a few operational principles.

1. Cost Attribution

Cost attribution links spending to specific users, teams, queries, dashboards, or pipelines.

Without attribution, a data warehouse bill looks like a single number. Engineers cannot see which workloads consume the most compute.

Attribution allows teams to answer practical questions such as:

- Which dashboard triggers expensive queries?

- Which pipeline refresh frequency drives compute cost?

- Which team generates the largest share of warehouse usage?

Once teams identify expensive workloads, they can improve them.
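One simple way to picture attribution is to split a warehouse's billed credits across teams in proportion to the query runtime each team generated. The sketch below is a minimal illustration under that assumption, not a description of how any particular platform computes cost. The `query_log` records, the `team` and `runtime_s` fields, and the `attribute_credits` helper are all hypothetical.

```python
from collections import defaultdict


def attribute_credits(query_log, credits_billed):
    """Split billed credits across teams in proportion to query runtime.

    Assumption: runtime share is an acceptable proxy for cost share.
    Real platforms may also need to account for idle time and concurrency.
    """
    runtime_by_team = defaultdict(float)
    for row in query_log:
        runtime_by_team[row["team"]] += row["runtime_s"]

    total_runtime = sum(runtime_by_team.values())
    if total_runtime == 0:
        return {}

    return {
        team: credits_billed * runtime / total_runtime
        for team, runtime in runtime_by_team.items()
    }


# Hypothetical query log for one warehouse over one billing period.
query_log = [
    {"team": "analytics", "runtime_s": 1200},
    {"team": "analytics", "runtime_s": 600},
    {"team": "ml", "runtime_s": 5400},
    {"team": "finance", "runtime_s": 1800},
]

shares = attribute_credits(query_log, credits_billed=100.0)
for team, credits in sorted(shares.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{team}: {credits:.1f} credits")
```

The same grouping works for dashboards, pipelines, or users by swapping the key used to aggregate runtime.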

2. Usage Visibility

Visibility across the data stack gives engineers insight into how workloads interact.

Queries trigger downstream jobs. Pipelines refresh tables. Dashboards schedule recurring workloads.

Data FinOps platforms map these relationships so teams understand which operations generate compute demand.

Visibility also helps teams detect patterns such as:

- dashboards refreshing too frequently

- pipelines loading unused data

- warehouses running oversized compute clusters

3. Cost-Aware Data Engineering

Engineering teams make architectural decisions every day.

Pipeline frequency, warehouse size, query structure, and caching behavior all influence cost.

Data FinOps encourages engineers to consider financial impact when designing pipelines or analytics models.

Teams often improve cost efficiency by:

- Adjusting job frequency

- Reducing unnecessary data refresh cycles

- Tuning queries

- Consolidating pipelines

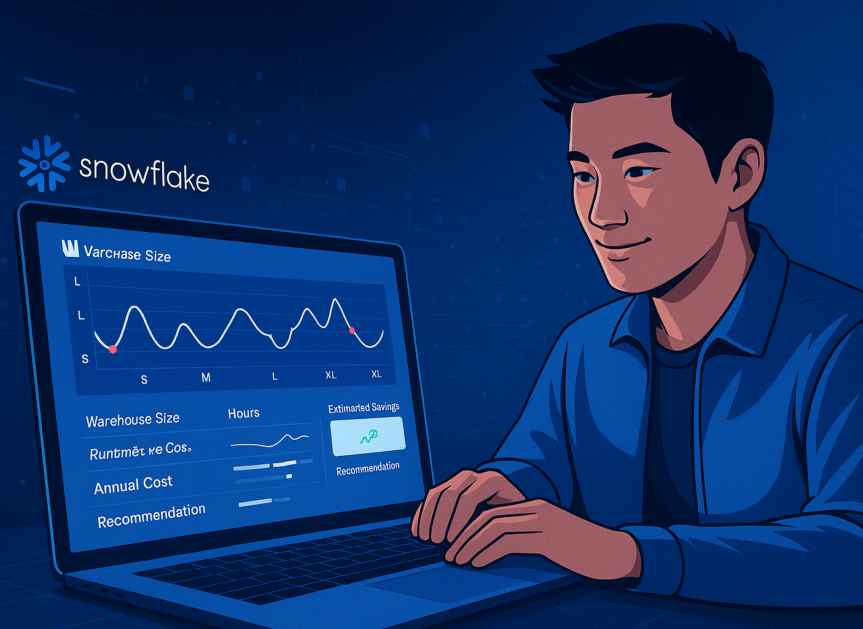

- Resizing warehouses

Data FinOps vs Cloud FinOps

Cloud FinOps manages infrastructure costs across services such as compute instances, storage, and networking.

Data FinOps focuses on analytics and warehouse workloads.

Both disciplines share similar goals, but the optimization layer differs.

| Category | Cloud FinOps | Data FinOps |
| --- | --- | --- |
| Scope | Infrastructure services | Data warehouses and analytics workloads |
| Main users | Cloud engineers, platform teams | Data engineers, analytics engineers |
| Optimization focus | VM usage, storage tiers | Queries, pipelines, and warehouse size |
| Visibility challenge | Resource allocation | Query and pipeline attribution |

As companies move more workloads into data warehouses, Data FinOps becomes a specialized discipline inside the broader FinOps strategy.

Common Data FinOps Challenges

Organizations often struggle with several operational barriers.

Lack of Cost Attribution

Many warehouses generate a single monthly bill without detailed workload mapping.

Teams cannot identify which pipelines or dashboards generate spend.

Hidden Query Costs

BI tools frequently trigger background queries.

Dashboards refreshing every few minutes may drive large compute usage without engineers noticing.
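A rough calculation shows how refresh frequency adds up. The sketch below assumes cost scales with the seconds a dashboard's queries keep a warehouse busy, which is a simplification: it ignores idle time before auto-suspend, minimum billing increments, and concurrency, so treat the result as a lower bound. The function name and every input value are hypothetical.

```python
def daily_refresh_cost(refresh_minutes, queries_per_refresh,
                       seconds_per_query, credits_per_hour):
    """Estimate daily refresh count and credits for one scheduled dashboard."""
    refreshes_per_day = (24 * 60) // refresh_minutes
    busy_seconds = refreshes_per_day * queries_per_refresh * seconds_per_query
    credits = busy_seconds / 3600 * credits_per_hour
    return refreshes_per_day, credits


# Hypothetical dashboard: 8 queries of about 6 seconds each,
# running on a warehouse that consumes 4 credits per hour.
for interval in (5, 60):
    refreshes, credits = daily_refresh_cost(
        refresh_minutes=interval,
        queries_per_refresh=8,
        seconds_per_query=6,
        credits_per_hour=4,
    )
    print(f"every {interval} min: {refreshes} refreshes/day, "
          f"{credits:.2f} credits/day")
```

Under these assumed numbers, moving from a 5-minute to an hourly refresh cuts the dashboard's daily compute by a factor of twelve.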

Pipeline Waste

Data pipelines sometimes run even when downstream tables or dashboards receive no usage.

Unused pipelines create unnecessary warehouse activity.
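Detecting this kind of waste usually comes down to joining pipeline outputs against read activity. The sketch below flags pipelines whose output tables have not been read within a chosen window. It assumes you can already obtain a mapping from pipelines to output tables and a last-read date per table (for example from lineage and access logs). The data structures, names, and the 30-day threshold are hypothetical.

```python
from datetime import date, timedelta


def find_unused_pipelines(pipelines, last_read_by_table, today, max_idle_days=30):
    """Return pipelines for which no output table was read since the cutoff."""
    cutoff = today - timedelta(days=max_idle_days)
    unused = []
    for name, output_tables in pipelines.items():
        # A table with no recorded read is treated as never read.
        recently_read = any(
            last_read_by_table.get(table) is not None
            and last_read_by_table[table] >= cutoff
            for table in output_tables
        )
        if not recently_read:
            unused.append(name)
    return sorted(unused)


# Hypothetical lineage: pipeline name -> tables it writes.
pipelines = {
    "orders_daily": ["orders_clean"],
    "legacy_export": ["export_v1", "export_v2"],
    "events_hourly": ["events_agg"],
}

# Hypothetical access data: table -> date of most recent read.
last_read_by_table = {
    "orders_clean": date(2024, 6, 28),
    "export_v1": date(2024, 1, 15),
    "events_agg": date(2024, 6, 30),
}

unused = find_unused_pipelines(pipelines, last_read_by_table, today=date(2024, 7, 1))
print(unused)
```

A flagged pipeline is a candidate for review rather than automatic removal, since some tables are read rarely but still matter (for example, quarterly reporting).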

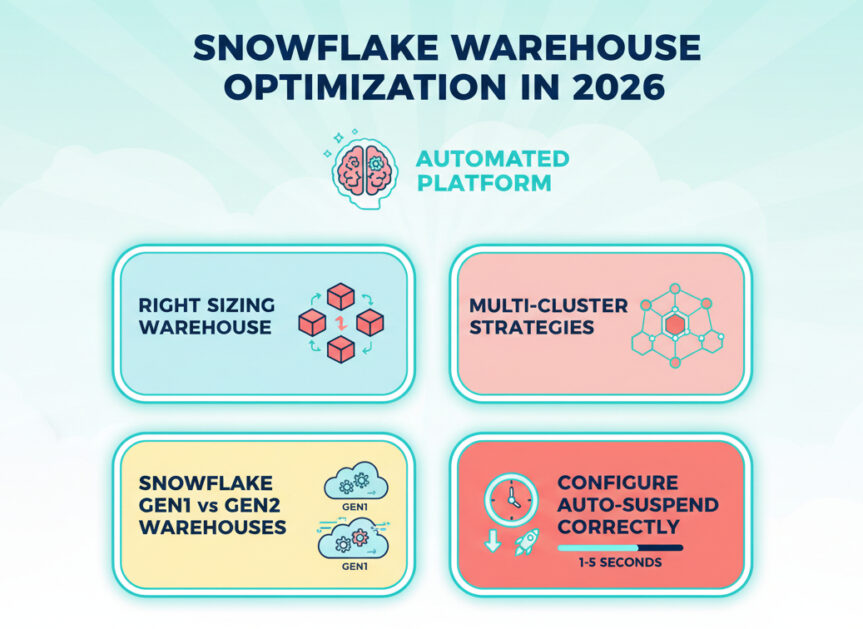

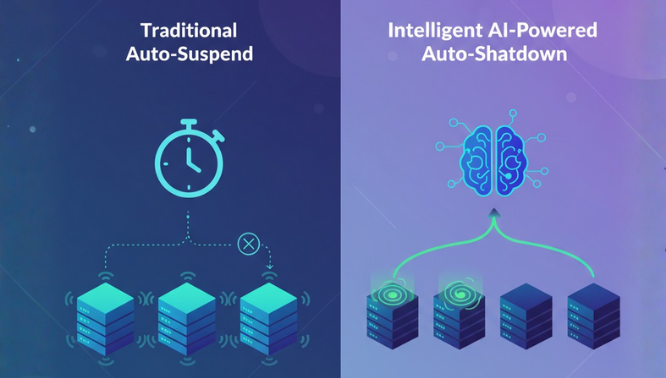

Warehouse Over-Provisioning

Data teams frequently oversize compute clusters to avoid performance issues.

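Larger warehouses often complete queries only slightly faster while consuming significantly more credits. On Snowflake, for example, each step up in warehouse size doubles the credits consumed per hour, so a larger size pays off only when runtime drops by a comparable factor.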

Data FinOps introduces measurement so teams can balance performance and cost.
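The trade-off can be made concrete by comparing credits per run across sizes. The sketch below uses Snowflake's standard per-hour credit rates for the listed sizes; the measured runtimes are invented for illustration, and the calculation ignores minimum billing increments and idle time. The `credits_per_run` and `cheapest_within` helpers are hypothetical names.

```python
# Credits per hour for standard Snowflake warehouse sizes (each size doubles).
CREDITS_PER_HOUR = {"XS": 1, "S": 2, "M": 4, "L": 8}


def credits_per_run(size, runtime_seconds):
    """Credits consumed by one run of a workload on the given size."""
    return CREDITS_PER_HOUR[size] * runtime_seconds / 3600


def cheapest_within(measured_runtimes, max_runtime_seconds):
    """Pick the cheapest size whose measured runtime meets the target."""
    candidates = {
        size: credits_per_run(size, seconds)
        for size, seconds in measured_runtimes.items()
        if seconds <= max_runtime_seconds
    }
    if not candidates:
        return None
    return min(candidates, key=candidates.get)


# Hypothetical runtimes (seconds) for the same job on three sizes.
measured = {"S": 900, "M": 480, "L": 420}

for size, seconds in measured.items():
    print(f"{size}: {seconds}s, {credits_per_run(size, seconds):.2f} credits/run")

print("cheapest within 10 minutes:", cheapest_within(measured, 600))
```

With these assumed runtimes, the largest size saves one minute over the medium size but costs noticeably more per run, which is the pattern measurement is meant to expose.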

How Data Observability Supports Data FinOps

Data observability platforms help teams monitor pipelines, queries, and data assets.

Modern observability platforms also track financial impact.

Observability combined with cost attribution allows teams to:

- Identify expensive queries

- Map warehouse usage across teams

- Detect unused tables or pipelines

- Understand which workloads drive compute demand

When observability includes financial context, teams move from reactive cost reviews to continuous optimization.

Data FinOps and Seemore

Seemore helps organizations implement Data FinOps by connecting warehouse activity, lineage, and cost attribution.

The platform analyzes workloads across Snowflake environments and shows:

- Which queries generate the highest spend

- Which pipelines refresh unused data

- How teams consume warehouse resources

- Where warehouse sizing wastes credits

Engineering teams gain the visibility required to adjust pipelines, tune queries, and manage warehouse usage more effectively.

Leadership teams gain a clear view of how data workloads translate into financial impact.

Final Thoughts

Modern data platforms give teams powerful analytics capabilities. Yet rapid growth in queries, pipelines, and dashboards often produces unexpected warehouse bills.

Data FinOps introduces operational discipline into the data layer.

Teams monitor usage, attribute cost to workloads, and adjust engineering decisions based on financial impact.

When organizations combine Data FinOps practices with strong observability tools, they gain control over data spend while maintaining performance across their analytics environment.